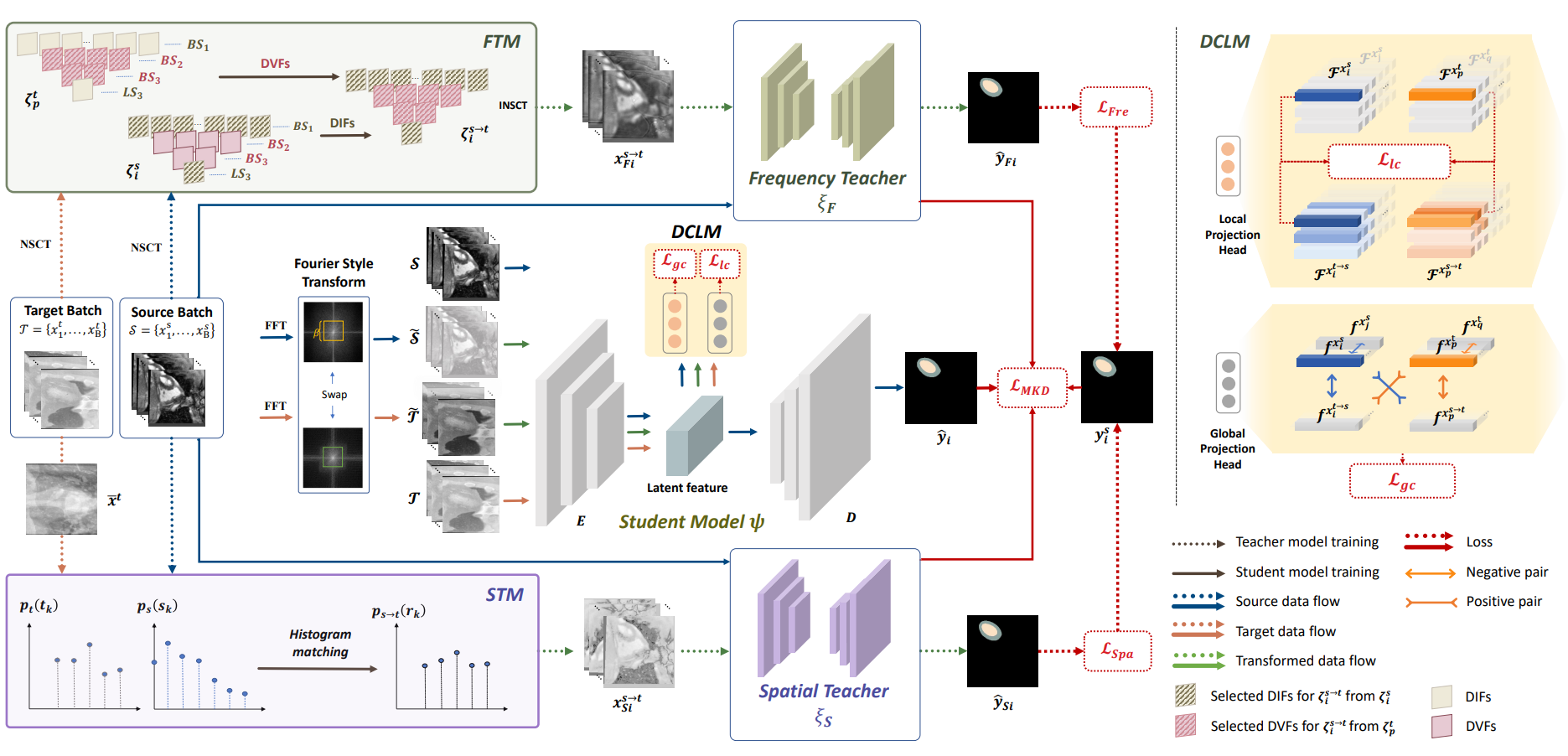

A Structure-aware Framework of Unsupervised Cross-Modality Domain Adaptation via Frequency and Spatial Knowledge Distillation

Shaolei Liu, Siqi Yin, Linhao Qu, Manning Wang†, Zhijian Song†

IEEE Transactions on Medical Imaging (IF = 10.6)

Unsupervised domain adaptation (UDA) aims to train a model on a labeled source domain and adapt it to an unlabeled target domain. In the field of medical image segmentation, most existing UDA methods rely on adversarial learning to bridge the domain gap between image modalities. However, adversarial training is complicated and inefficient. In this paper, we propose a simple yet effective UDA method based on both frequency- and spatial-domain transfer under a multi-teacher distillation framework. In the frequency domain, we introduce the non-subsampled contourlet transform (NSCT) to identify domain-invariant and domain-variant frequency components (DIFs and DVFs), and we replace the DVFs of source-domain images with those of target-domain images while keeping the DIFs unchanged, thereby narrowing the domain gap. In the spatial domain, we propose a histogram matching strategy based on batch momentum updates to reduce domain-variant image style bias. Additionally, we propose a dual contrastive learning module at both the image and pixel levels to learn structure-related information. Our method outperforms state-of-the-art methods on two cross-modality medical image segmentation datasets (cardiac and abdominal). Code is available at https://github.com/slliuEric/FSUDA.
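The spatial-domain step can be illustrated with a minimal NumPy sketch. The abstract does not give the exact update rule, so this assumes that a "batch momentum update" means an exponential moving average of the normalized target-domain intensity histogram across batches (new = momentum * old + (1 - momentum) * batch histogram). Each source image is then mapped onto that running histogram by classic CDF-based histogram specification. The class name `MomentumHistogramMatcher`, its parameters, and the assumption that intensities are normalized to [0, 1] are hypothetical and are not taken from the FSUDA repository.

```python
import numpy as np


class MomentumHistogramMatcher:
    """Hypothetical sketch: keeps a momentum-averaged target-domain histogram
    and maps source-domain images onto it via CDF matching.

    Assumption: intensities lie in value_range (default [0, 1]).
    """

    def __init__(self, n_bins=256, momentum=0.9, value_range=(0.0, 1.0)):
        self.n_bins = n_bins
        self.momentum = momentum
        self.edges = np.linspace(value_range[0], value_range[1], n_bins + 1)
        self.centers = 0.5 * (self.edges[:-1] + self.edges[1:])
        self.target_hist = None  # running, normalized target histogram

    def update(self, target_batch):
        """Fold one batch of target-domain images into the running histogram."""
        hist, _ = np.histogram(target_batch, bins=self.edges)
        hist = hist.astype(np.float64) / max(hist.sum(), 1)
        if self.target_hist is None:
            self.target_hist = hist
        else:
            # Assumed EMA form: old statistics weighted by `momentum`.
            self.target_hist = (self.momentum * self.target_hist
                                + (1.0 - self.momentum) * hist)

    def match(self, source_image):
        """Remap source intensities so their CDF follows the running target CDF."""
        if self.target_hist is None or self.target_hist.sum() == 0:
            raise RuntimeError("call update() with a non-empty target batch first")
        source_image = np.asarray(source_image, dtype=np.float64)

        # Source CDF per bin.
        src_hist, _ = np.histogram(source_image, bins=self.edges)
        src_cdf = np.cumsum(src_hist).astype(np.float64)
        src_cdf /= max(src_cdf[-1], 1.0)

        # Target CDF from the momentum-averaged histogram.
        tgt_cdf = np.cumsum(self.target_hist)
        tgt_cdf /= tgt_cdf[-1]

        # Bin index of every source pixel (out-of-range values are clipped).
        idx = np.clip(np.digitize(source_image, self.edges) - 1, 0, self.n_bins - 1)

        # Histogram specification: first target bin whose CDF reaches the source CDF.
        tgt_bin = np.searchsorted(tgt_cdf, src_cdf[idx], side="left")
        tgt_bin = np.clip(tgt_bin, 0, self.n_bins - 1)
        return self.centers[tgt_bin]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    matcher = MomentumHistogramMatcher(n_bins=64, momentum=0.9)
    for _ in range(5):
        # Stand-in "target" batches: darker images.
        matcher.update(rng.beta(2, 5, size=(4, 32, 32)))
    source = rng.beta(5, 2, size=(32, 32))  # brighter "source" image
    styled = matcher.match(source)
    print(source.mean(), styled.mean())
```

Keeping a running histogram, rather than matching each source image to a single target batch, smooths out batch-to-batch variation in target style; a momentum close to 1 makes the reference histogram change slowly. Note that this EMA convention (momentum weights the old value) is the opposite of the `momentum` argument in PyTorch batch normalization.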